Anthropic's AI Breakthrough Sparks White House Security Talks

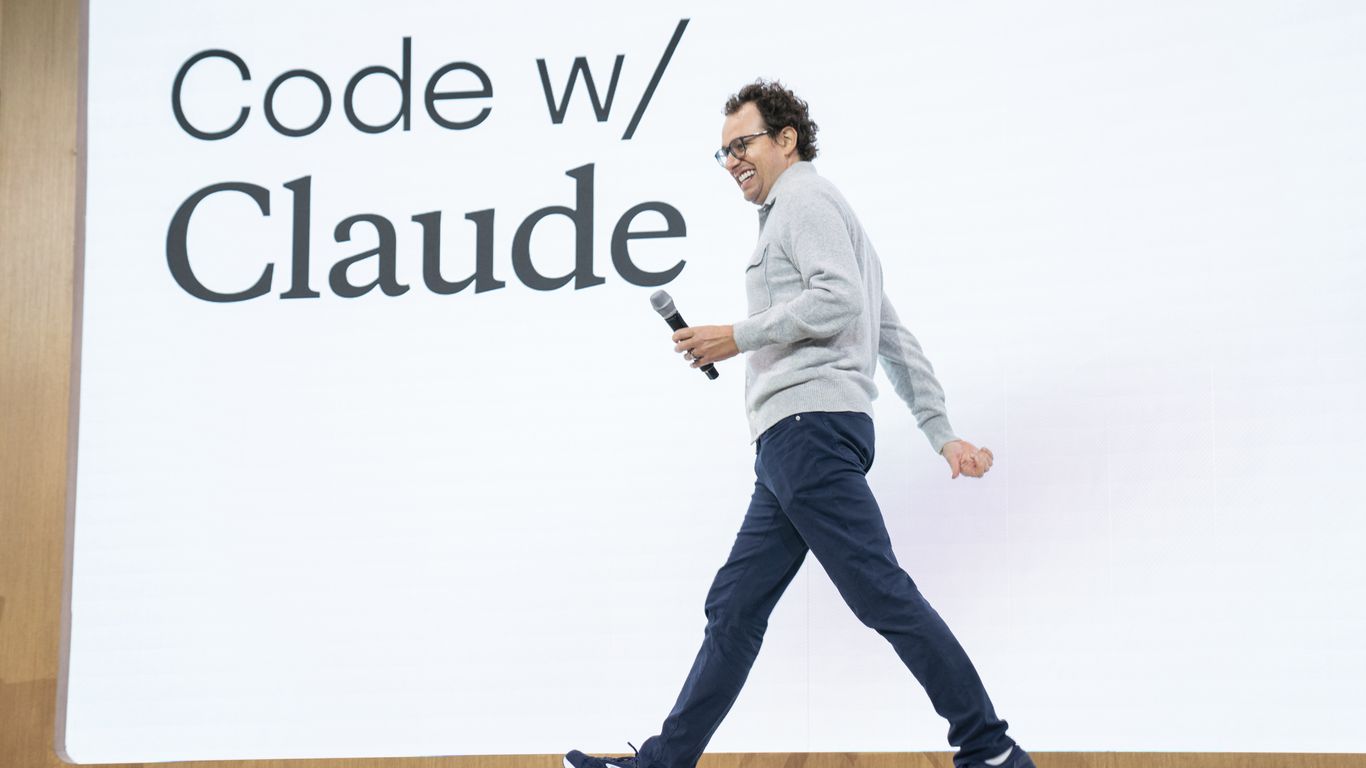

Anthropic CEO Dario Amodei is scheduled to meet with White House Chief of Staff Susie Wiles on Friday, April 19, 2026, in what represents a critical breakthrough in the ongoing standoff between the AI company and the Pentagon over the company's powerful new Claude model, codenamed Mythos. The meeting comes as the Trump administration tries to balance AI innovation against the unprecedented cybersecurity concerns raised by the model's capabilities.

The Mythos Model: A Game-Changing AI with Dual-Edge Potential

Claude Mythos represents a significant leap forward in artificial intelligence capabilities, particularly in cybersecurity analysis and penetration testing. According to industry sources, the model demonstrates an unprecedented ability to identify and potentially exploit vulnerabilities in digital infrastructure, making it both a powerful defensive tool and a concerning offensive capability.

The AI model's sophistication lies in its ability to understand complex system architectures and identify security weaknesses that human analysts might miss. This capability has attracted both praise from cybersecurity professionals who see its defensive potential and concern from government officials worried about its possible misuse.

"The technical capabilities we're seeing with Mythos are unlike anything we've encountered before," said a senior administration official who requested anonymity. "It's simultaneously our greatest opportunity and our biggest challenge in AI governance."

The model's development comes at a time when cybersecurity threats are at an all-time high, as nation-state actors and criminal organizations mount increasingly sophisticated attacks on critical infrastructure. Mythos could theoretically serve as either a shield against such attacks or, in the wrong hands, a weapon to enable them.

Pentagon Tensions and National Security Implications

The conflict between Anthropic and the Pentagon centers on questions of access, oversight, and control over AI technologies with national security implications. Defense officials have reportedly expressed concerns about allowing such powerful cybersecurity capabilities to remain solely under private sector control, while Anthropic has pushed back against what it views as government overreach.

The tension reflects broader questions about the role of private AI companies in national security and the extent to which the government should have access to or control over cutting-edge AI technologies. This debate has intensified as AI capabilities have grown more sophisticated and their potential applications in warfare and intelligence have become clearer.

Sources familiar with the discussions indicate that the Pentagon has sought greater transparency into Mythos's capabilities and potentially some level of oversight over its deployment. Anthropic, meanwhile, has maintained that excessive government interference could stifle innovation and compromise the company's ability to develop beneficial AI technologies.

The Friday meeting is the highest-level engagement between the company and the administration to date, suggesting both sides recognize the need for a workable compromise. The involvement of Chief of Staff Wiles indicates the issue now has the attention of the administration's most senior leadership.

AI Governance at a Crossroads

This high-stakes meeting occurs against the backdrop of an evolving global landscape for AI regulation and governance. The Trump administration has taken a more hands-off approach to tech regulation compared to some international counterparts, but the national security implications of advanced AI have forced a more nuanced position.

The administration faces pressure from multiple directions: defense hawks calling for greater government control over AI technologies with military applications, tech industry leaders advocating for innovation-friendly policies, and cybersecurity experts warning about the risks of both over-regulation and under-regulation.

Recent cyberattacks on critical infrastructure have heightened awareness of security vulnerabilities and of the role AI could play in both creating and solving them. The Colonial Pipeline attack of 2021 and subsequent incidents have demonstrated the real-world consequences of cybersecurity failures, making the stakes of the Mythos discussion particularly high.

International competitors, particularly China, have made significant investments in AI capabilities with potential military applications. This competitive dynamic adds urgency to finding the right balance between oversight and innovation in American AI development.

Industry Context and Expert Analysis

The Anthropic-Pentagon tension reflects broader challenges facing the AI industry as models become more capable and their potential applications more far-reaching. Other major AI companies, including OpenAI and Google DeepMind, have faced similar questions about the national security implications of their technologies.

"We're entering uncharted territory where the line between civilian and military AI applications is increasingly blurred," said Dr. Sarah Chen, a former NSA analyst now working as an independent AI policy consultant. "The Mythos situation is a test case for how we'll handle these issues going forward."

Industry observers note that the outcome of these discussions could set important precedents for how the government engages with AI companies on national security matters. A heavy-handed approach could drive innovation overseas, while insufficient oversight could leave critical vulnerabilities unaddressed.

The timing of the meeting is also significant, coming just months before the 2026 midterm elections. Both parties have made AI policy a key issue, though they differ significantly on the appropriate level of government involvement in regulating and overseeing AI development.

What's Next: Implications for AI Development and Policy

The outcome of Friday's meeting could significantly influence the trajectory of AI policy in the United States and potentially globally. Success in finding a compromise could establish a framework for future government-industry collaboration on sensitive AI technologies, while failure could lead to more adversarial relationships.

Key issues likely to be discussed include data sharing protocols, security clearance requirements for AI researchers, export controls on AI technologies, and the establishment of oversight mechanisms that balance security concerns with innovation needs.

The resolution of this conflict will be closely watched by other AI companies, international allies, and competitors. A successful framework could become a model for other nations grappling with similar challenges, while a breakdown could accelerate the fragmentation of global AI governance approaches.
